On April 7, 2026, a model nobody had heard of appeared on the Artificial Analysis Video Arena — the internet's blind taste test for AI video. Within hours it sat at #1 in both text-to-video and image-to-video, ahead of Seedance 2.0, Kling 3.0, Veo 3.1, and every other model on the board.

No company name. No paper. No GitHub. Just thousands of users picking it over everything else in side-by-side comparisons.

Three days later, Alibaba claimed ownership. Then the model vanished from the leaderboard.

This is what we know — what HappyHorse is good at, where it falls short, how it compares to everything else, and when you can actually use it.

See It In Action

Before we get into anything else, watch these. All clips are HappyHorse 1.0 outputs pulled from the Artificial Analysis Video Arena, the same blind-test pool that put the model at #1.

The visual quality speaks for itself. Soft volumetric lighting, natural skin tones, film-grade color grading, and character consistency across shots. This is what made blind-test voters consistently choose it over the competition.

What HappyHorse Is Good At

Visual Quality That Looks Like Cinematography

This is where HappyHorse genuinely pulls ahead. The output has a quality that reviewers describe as "film-grade" — not in a marketing sense, but in the way it handles light, texture, and color. Skin looks like skin. Fabric folds naturally. Lighting wraps around subjects with soft volumetric falloff instead of the flat, overlit look most AI video models produce.

Audio and Video Together — No Stitching

Most AI video tools generate video first, then bolt audio on with a separate model. HappyHorse generates both in the same pass. A door closing produces its sound at the exact frame it shuts, and footsteps match the walking cadence. It's not perfect, but the synchronization is noticeably tighter than typical pipeline approaches.

Prompt Adherence

When you describe camera direction ("slow dolly push-in," "overhead crane shot") or motion specifics ("breeze" vs "strong wind"), HappyHorse follows the instruction more precisely than most models. It distinguishes between nuances that other models flatten into generic motion.

Speed

Full 1080p clips generate in ~38 seconds. Lower-resolution previews come back in about 2 seconds. That's 2–3x faster than most competitors at the same quality level — fast enough to actually iterate on prompts without losing your creative momentum.
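To put the speed numbers in practical terms, here is a back-of-the-envelope sketch of how many drafts fit into an hour of iteration. It assumes generations run back to back and uses only the figures quoted above (about 38 seconds per 1080p clip, about 2 seconds per preview). The competitor times are simply 2x and 3x the HappyHorse figure, not measured values for any specific model, and the helper name is illustrative.

```python
# Back-of-the-envelope iteration budget using the timings quoted in this article.
SECONDS_PER_HOUR = 3600


def generations_per_hour(seconds_per_clip: float) -> int:
    """Clips that fit in one hour if each generation runs back to back.

    Ignores queue time, failed runs, and time spent reviewing output.
    """
    return int(SECONDS_PER_HOUR // seconds_per_clip)


HAPPYHORSE_1080P_S = 38  # reported full 1080p generation time
PREVIEW_S = 2            # reported low-resolution preview time

# Assumption: "2-3x faster" means a comparable competitor takes 2x to 3x as long.
competitor_times_s = [HAPPYHORSE_1080P_S * factor for factor in (2, 3)]

print("HappyHorse 1080p clips/hour:", generations_per_hour(HAPPYHORSE_1080P_S))
print("HappyHorse previews/hour:", generations_per_hour(PREVIEW_S))
for t in competitor_times_s:
    print(f"Hypothetical competitor at {t}s/clip:", generations_per_hour(t))
```

Under those assumptions, an hour fits about 94 full 1080p drafts, against roughly 47 for a model twice as slow and 31 for one three times as slow. Real sessions will land lower once queue time and reviewing each clip are counted.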

Where HappyHorse Falls Short

It's not all perfect. Here's where HappyHorse has clear limitations right now:

- You can't use it yet. The API doesn't launch until April 30. Open-source weights are "coming soon." As of today, there's no way to generate with HappyHorse — you can only watch the demos. Seedance 2.0 and Kling 3.0 are available right now.

- Audio categories are tight, not dominant. In with-audio Arena rankings, HappyHorse leads Seedance 2.0 by only 6 Elo points — basically a tie. The joint audio-video generation is impressive engineering, but the audio quality itself isn't a clear leap over competitors.

- Short clips only. HappyHorse generates clips of 5 to 10 seconds. Kling 3.0 supports up to 2 minutes with its Extend feature. For longer content, you'll still need to sequence multiple clips.

- Limited input control. Seedance 2.0 accepts up to 9 reference images, 3 video clips, and 3 audio files in a single generation. HappyHorse supports text and single-image input only. If you need fine-grained reference control, Seedance wins.

- No 4K. HappyHorse outputs 1080p. Kling 3.0 and Veo 3.1 support native 4K. If resolution matters for your use case, this is a gap.

- The leaderboard score needs time. HappyHorse accumulated its Elo in under a week before being pulled. Most models stabilize over weeks or months. The scores are impressive but haven't been stress-tested at scale yet.

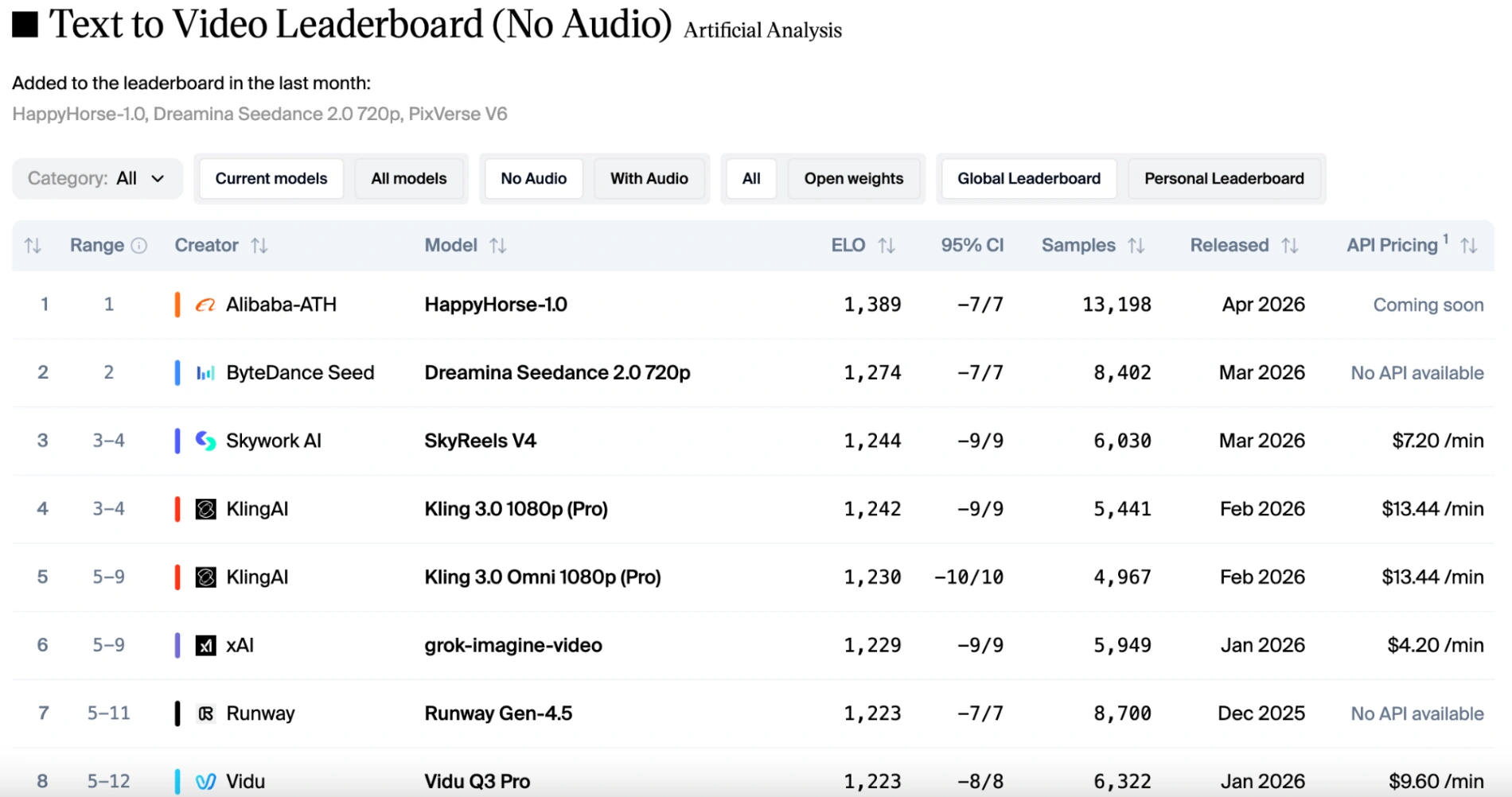

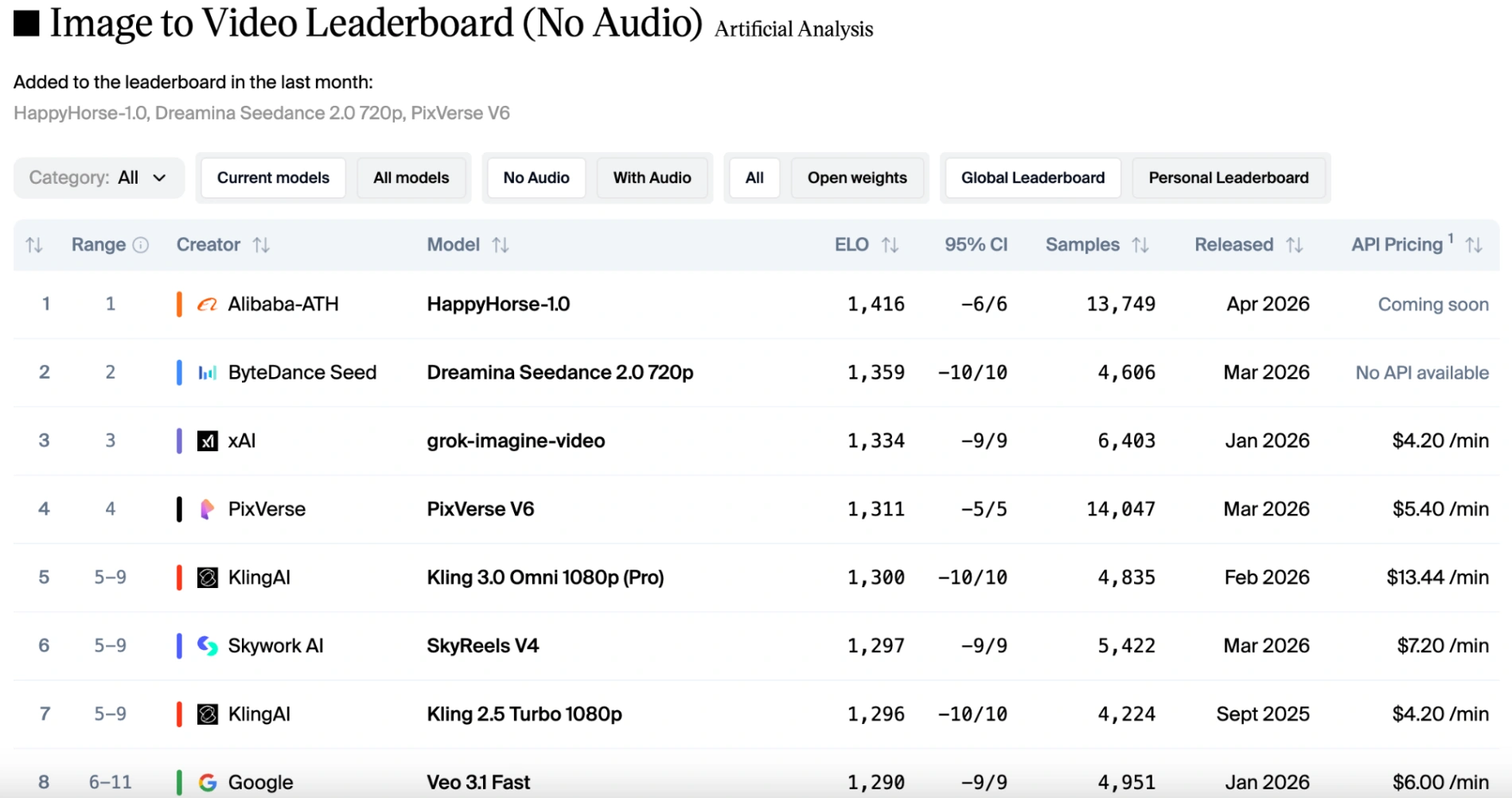

Current Rankings: Where HappyHorse Stands

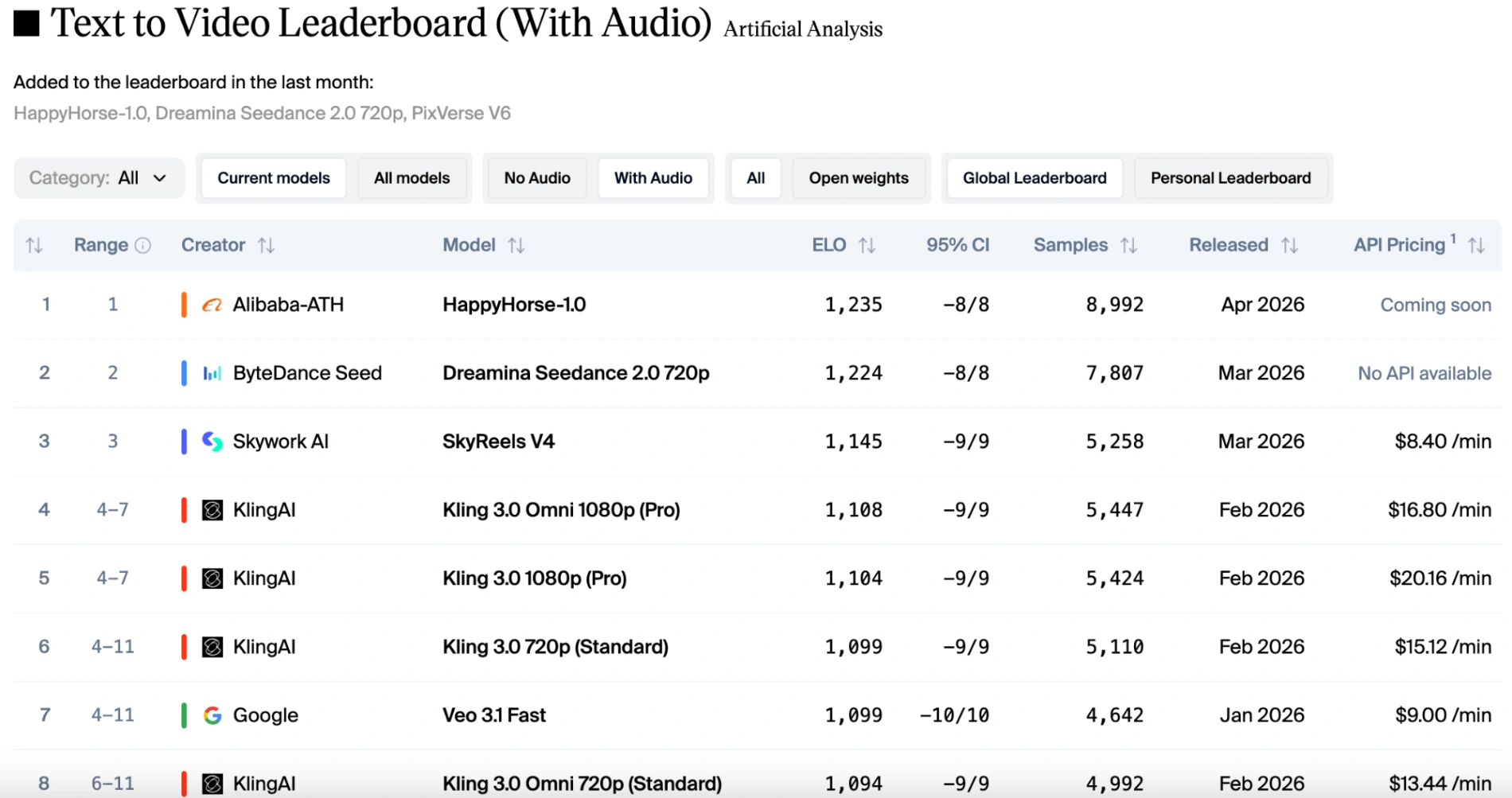

These are the Artificial Analysis Video Arena Elo scores from mid-April 2026. Scores shift daily — this is a snapshot.

The key takeaway: HappyHorse dominates in pure visual quality (95-point Elo lead in text-to-video). In the with-audio category, it's basically neck-and-neck with Seedance 2.0.
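Elo gaps are easier to read as head-to-head odds. The sketch below assumes the Arena scores follow the standard Elo convention (a logistic curve on a 400-point scale); Artificial Analysis may fit its ratings differently, so treat the output as a rough interpretation rather than the leaderboard's own math.

```python
def elo_expected_score(rating_gap: float, scale: float = 400.0) -> float:
    """Expected share of votes for the higher-rated model.

    Assumes the standard Elo logistic curve with a 400-point scale.
    """
    return 1.0 / (1.0 + 10 ** (-rating_gap / scale))


# Gaps quoted in this article (mid-April 2026 snapshot).
gaps = {
    "Text-to-video lead": 95,
    "With-audio lead over Seedance 2.0": 6,
}

for label, gap in gaps.items():
    print(f"{label}: {gap} Elo -> about {elo_expected_score(gap):.1%} expected win rate")
```

On that assumption, a 95-point lead means voters would pick HappyHorse in roughly 63% of text-to-video matchups, while a 6-point lead works out to about 51%, which is why the with-audio result reads as a tie.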

HappyHorse vs Seedance vs Kling vs Veo — Side by Side

This comparison runs the same prompts through HappyHorse, Seedance 2.0, Kling 3.0, and Veo 3.1 Lite side by side.

If you want to run your own blind test, the Artificial Analysis Video Arena lets you vote on unlabeled clips from every major model. That's the pool HappyHorse earned its lead on in the first place.

Quick summary of what consistently stands out when HappyHorse is pitted against the others in the Arena:

- HappyHorse — Best lighting, skin tones, and overall visual polish. Strongest prompt adherence for camera movements.

- Seedance 2.0 — Best multi-character handling and multimodal reference control. Slightly better audio in some clips.

- Kling 3.0 — Best for longer content (up to 2 min) and 4K output. Strongest multi-shot consistency.

- Veo 3.1 — Best all-rounder for prompt adherence and natural motion. Native 4K.

The Backstory: Anonymous Entry, Alibaba Reveal

The Video Arena is a blind test — users see two clips side by side, no model names, and vote for whichever looks better. On April 7, "HappyHorse" was submitted anonymously. Both a V1 and V2 version appeared. Within hours, it passed everything on the board.

Nobody knew who made it. Three theories circulated: a rebranded Alibaba Wan 2.7, a secret ByteDance experiment, or an unknown lab building credibility through blind-test data. On April 10, Alibaba confirmed it was theirs, and its Hong Kong-listed shares rose 2.12% on the news.

The person behind it is Zhang Di, known as the "father of Kling." He led Kling's development at Kuaishou, then returned to Alibaba in November 2025 and led the team that built HappyHorse within months. The model that dethroned Kling on the leaderboard came from the same person who created Kling.

HappyHorse Release Date and API Access

- April 7, 2026 — Anonymous appearance on Artificial Analysis Video Arena

- April 10, 2026 — Alibaba publicly claims ownership

- April 30, 2026 (confirmed) — API launch via exclusive third-party provider

- Late April / May 2026 (expected) — Open-source weights (Apache 2.0) on HuggingFace and GitHub

No public pricing yet, but Chinese AI video APIs typically run around $0.04 per second — significantly cheaper than Western alternatives like Runway ($0.25+/second on Pro plans).
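Here is what those per-second rates mean per clip. This is a sketch under stated assumptions: HappyHorse has no published price, $0.04/second is the article's typical figure for Chinese video APIs rather than a HappyHorse quote, billing is assumed to scale linearly with output length, and the helper name is illustrative.

```python
# Per-second rates quoted in this article. HappyHorse pricing is NOT published;
# $0.04/s is the article's typical rate for Chinese video APIs, used as a stand-in.
RATES_PER_SECOND = {
    "Typical Chinese video API (estimate)": 0.04,
    "Runway Pro (starting rate)": 0.25,
}

CLIP_LENGTHS_S = (5, 10)  # HappyHorse's stated clip range


def clip_cost(rate_per_second: float, seconds: float) -> float:
    """Cost of one clip, assuming billing scales linearly with output seconds."""
    return rate_per_second * seconds


for name, rate in RATES_PER_SECOND.items():
    costs = ", ".join(f"{s}s = ${clip_cost(rate, s):.2f}" for s in CLIP_LENGTHS_S)
    print(f"{name}: {costs}")
```

At those rates, a 5-second clip costs about $0.20 versus $1.25, and a 10-second clip about $0.40 versus $2.50, a gap of roughly 6x.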

The open-source release is expected to include the full 15B-parameter model, a faster distilled variant, and a super-resolution module, all under Apache 2.0 (commercial use permitted). As of this writing, GitHub and HuggingFace still show "Coming Soon."

How to Use HappyHorse

Luno Studio integrates every major AI video model into a single platform with a built-in timeline editor — so you don't need to bounce between five different tools to go from prompt to finished video.

When HappyHorse's API goes live on April 30, we're adding it to Luno's model roster. That means:

- Generate with HappyHorse alongside Seedance 2.0, Kling 3.0, Veo 3.1, and 10+ other models

- Compare outputs side by side — run the same prompt through HappyHorse and Seedance to see which nails your scene

- Edit directly in the timeline — trim, sequence, layer, and add transitions without leaving the platform

- Native audio drops in synced — HappyHorse's joint audio-video output lands in the timeline with audio already aligned

Create a free Luno account to get access on day one.

HappyHorse vs Higgsfield, OpenArt, and Freepik

If you're comparing AI video platforms, here's where HappyHorse sits relative to the other tools people are evaluating.

vs Higgsfield

Higgsfield aggregates AI video models into one platform — it's a wrapper, not a model. In early 2026, Higgsfield ran into trust issues: its X/Twitter account was suspended in February over creator payment disputes, and users complained that "unlimited" plans only covered the lowest-quality model. A 5-second Seedance 2.0 clip through Higgsfield costs roughly $0.50–$1.20.

HappyHorse outperforms Higgsfield's best model (Seedance 2.0) by 95 Elo points in the text-to-video Arena. Platforms like Luno Studio offer the same multi-model access, plus a professional editor, without the pricing and trust issues.

vs OpenArt

OpenArt is an image-first platform that expanded into video. Its strength is image workflows — ControlNet, inpainting, style transfer. It doesn't train its own video models. If video is your primary need, a purpose-built video platform with frontier models like HappyHorse will give you better results.

vs Freepik

Freepik is a stock asset marketplace with AI generation bolted on. Its video features use third-party models (Kling, among others) wrapped in Freepik's UI. Decent for quick social content, but if you need cutting-edge output quality, you should be using the models directly through a platform built for video production.

The Bottom Line

HappyHorse 1.0 is the most impressive AI video model to drop in 2026 — and it did it by showing up anonymously and winning on merit before anyone knew who made it. The visual quality is a genuine step ahead. The joint audio-video generation is the most convenient in the industry. The team has a proven track record.

The catch: you can't use it yet. API launches April 30. Open-source weights are coming. Until then, Seedance 2.0 and Kling 3.0 are your best options — and both are available in Luno Studio right now.

We'll update this post when HappyHorse goes live. Sign up free to be first in line.

Sources

- Artificial Analysis — Text-to-Video Leaderboard — Live Elo rankings and blind-test methodology for AI video models.

- Artificial Analysis — Image-to-Video Leaderboard — Image-to-video Arena rankings including HappyHorse scores.

- Bloomberg — "Stealth Alibaba Video AI Model Tops Global Ranking on Debut" — Breaking coverage of HappyHorse's anonymous leaderboard appearance and Alibaba's reveal.

- Bloomberg — "Alibaba's Happy Horse AI Model Gives China the Video-Creation Crown" — Analysis of HappyHorse's impact on the global AI video landscape.

- CNBC — "Alibaba Revealed as Creator of AI Video Generation Model HappyHorse-1.0" — Alibaba's public acknowledgment and benchmark reveal details.

- South China Morning Post — "Alibaba's HappyHorse Tops Seedance, Offering Glimpse into China's Race for AI Talent" — Zhang Di's background, the Kling connection, and China's AI talent wars.

- fal.ai — HappyHorse-1.0 Official API Provider Page — Confirmed API launch details and technical specifications.

- HappyHorsesAI.com — Official HappyHorse Showcase — Demo videos, leaderboard screenshots, and team information from Alibaba's ATH division.

- Artificial Analysis on X — HappyHorse Reveal Thread — Official announcement confirming HappyHorse as Alibaba's model with Arena placement data.

- Justine Moore on X — HappyHorse First Impressions — Independent testing noting multi-shot capabilities and prompt adherence.

- Apiyi.com — "HappyHorse Model Decryption: The AI Video Dark Horse" — Detailed timeline of the anonymous submission, disappearance, and origin theories.

- WaveSpeed AI — "What Is HappyHorse-1.0?" — Technical breakdown including architecture claims, Elo verification, and open-source status assessment.

- RoboRhythms — "Alibaba's HappyHorse AI Video Generator Just Topped the World" — Competitive analysis, Sora discontinuation context, and pricing projections.

Benchmark scores reflect mid-April 2026 data and shift daily. This article will be updated as HappyHorse's API launches and open-source weights become available.